Technical SEO

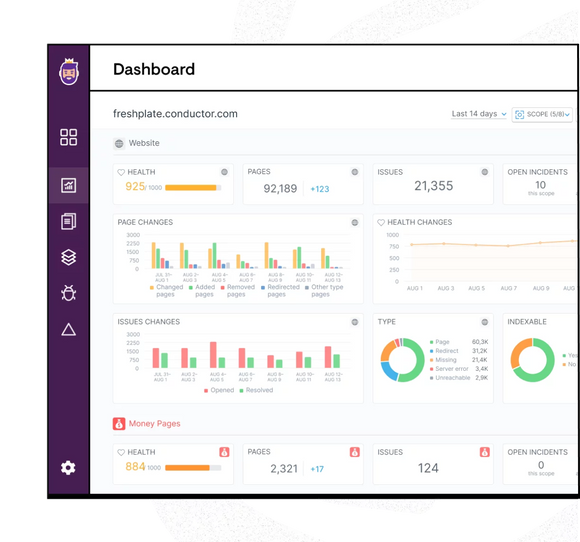

Outperform with a technically sound site

Optimize your site for peak technical performance and the best user experience with ContentKing, Conductor’s real-time technical monitoring solution.

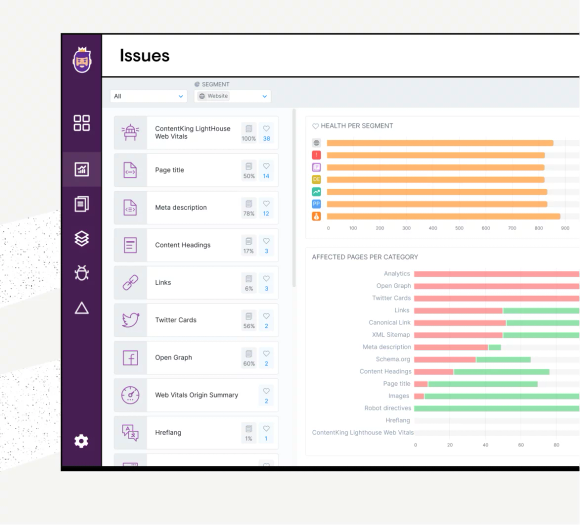

Always get found by search engines and customers

ContentKing is the only 24/7 SEO auditing and monitoring platform that helps you keep track of everything happening on your site as it happens, with opportunities prioritized by impact and scale.

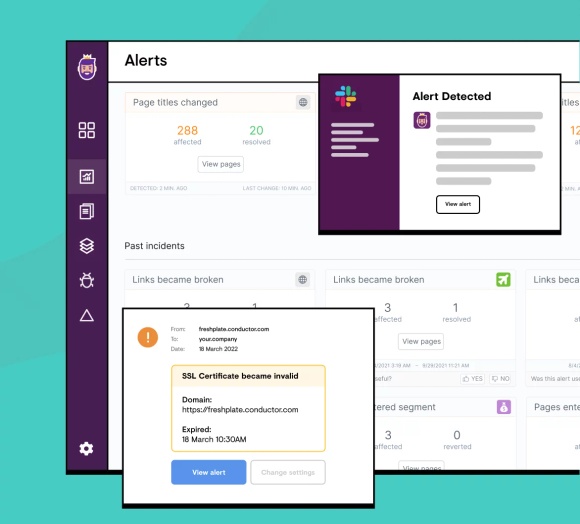

Tackle SEO issues before your traffic is impacted

ContentKing sends real-time alerts, so you don’t have to wait for a crawl to learn about changes or issues, and you can fix problems before rankings are impacted.

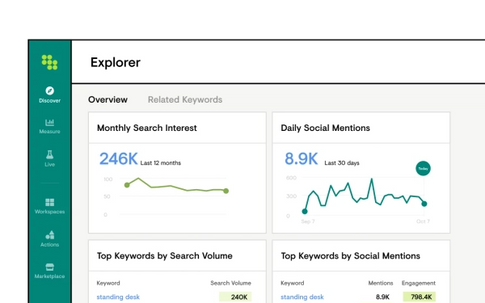

With Conductor, I can analyze our business rankings and visibility, identify content gap opportunities, and identify technical website issues. It is a perfect tool that helped me identify and create an SEO and content plan for our company.